Old-fashioned readability scores and indices don’t account for some of the most critical elements of online content presentation. Avoid using them as the only measure of the true readability of online content.

The story of a story

“Go, Dog. Go!” I love this book. Well, a version of this book. (The small one.)

Several popular children’s books from publisher Random House are available in two physical formats: the standard hardcover book and the board book. Each format has its pros and cons.

- Hardcover book. More, thinner pages for more content. But thinner pages tear more easily.

- Board book. Fewer, thicker pages mean less content. Super durable.

The more / less content situation wouldn’t be an issue if you were writing a book from scratch.

Instead, publishers are most likely taking their more popular, large-format titles through an editing and re-versioning process. In the end, they can introduce a more durable version to another audience: page-chewing babies.

Getting to the point

This content editing process HAS to be complicated, from an editorial point of view. Imagine the task of conveying the familiar storyline in less than one-seventh the number of words.

In the case of “Go, Dog. Go!”, the longer, hardcover version was trimmed from 528 words to only 70 words in the shorter, board book version. Each one of those 70 words matters, believe me. (I’ve read it hundreds of times.)

Sure, there are fewer words. But fewer words don’t always make a message or story clearer and easier to read.

Testing the readability of “Go, Dog. Go!”

Readability tests are equations using syllables, word counts, sentence counts, and familiar-word lists. Most often, they produce a number that corresponds with a grade level.
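To make the idea of an equation concrete, here is a minimal Python sketch of one widely used formula, the Flesch-Kincaid Grade Level. It is only an illustration, not necessarily one of the five tests used for this comparison. The function names are hypothetical, and the syllable counter is a rough vowel-group heuristic (an assumption), so scores will differ somewhat from those of published tools.

```python
import re


def count_syllables(word: str) -> int:
    """Rough estimate: count runs of vowels. A heuristic, not a dictionary lookup."""
    groups = re.findall(r"[aeiouy]+", word.lower())
    return max(1, len(groups))


def flesch_kincaid_grade(text: str) -> float:
    """Flesch-Kincaid Grade Level: 0.39 * (words / sentences)
    + 11.8 * (syllables / words) - 15.59."""
    # Treat ., !, and ? as sentence boundaries (a simplification).
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    # Words are runs of letters, optionally joined by straight apostrophes.
    words = re.findall(r"[A-Za-z]+(?:'[A-Za-z]+)*", text)
    if not sentences or not words:
        raise ValueError("text needs at least one sentence and one word")
    syllables = sum(count_syllables(w) for w in words)
    return (
        0.39 * len(words) / len(sentences)
        + 11.8 * syllables / len(words)
        - 15.59
    )


if __name__ == "__main__":
    sample = "The cat sat. The dog ran."
    print(round(flesch_kincaid_grade(sample), 2))
```

Notice what the function sees: letters, word boundaries, and sentence-ending punctuation. Nothing else about the text reaches the equation.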

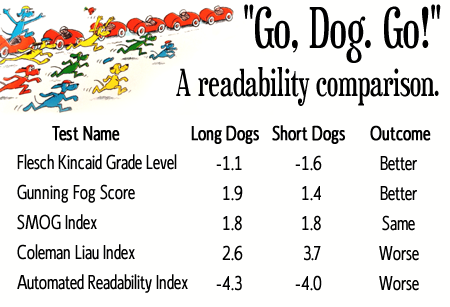

From a readability metric standpoint, you might think that such a dramatic reduction in content (the 528 versus 70 words) would make the story easier to digest. Based on five of the major readability tests, results were mixed.

Two tests showed improvements in readability in the shorter version, one saw no change, and two others came back with less favorable scores.

While the goal of the publisher, editor, and author is fitting the same, beloved storyline into fewer pages, another, more-interesting thing starts to happen: a brutal distillation. Only the essential remains.

The shorter version of “Go, Dog. Go!” sheds some of the more superfluous elements of the story. Gone are the hat-disapproving dog, unrelated sub-plots, and unnecessary filler. What we are left with is pure magic. Seriously, you need to read this short version. I still love reading it.

Why we test content readability

Some of us might test content JUST FOR FUN. Others are tasked with the duty of measuring how consumable the content is by some score or index. The duty of measuring readability does not end there. We want to:

- Determine if current content meets existing standards

- Establish a baseline readability score

- Set goals and standards for future content production

- Measure edited, re-vamped, and re-organized versions of the same content

How we’ve tested content readability in the past

The major readability tests were constructed in the 1940s to deal with printed texts. These equation-based systems improved the texts of the day: newspapers, books, etc. Familiar-word lists were refined, and other adjustments were made. Things were set for some time.

Research completed in the following decades took into account one of the foundational concepts for clear and concise online writing: structure. However, it proved impossible to fold structure-based variables into a traditional readability equation. Text needs are much more complex than could have ever been anticipated.

Today, a quick search online will produce several sites that offer options to measure content via cut-and-paste or by entering a page URL. Simple, quick, and easy. Right? NOT SO FAST.

This has an impact on online content

As we approach online content itself from a messaging and editorial standpoint, we encounter issues similar to those in the children’s book example. Adjusting text content can have a positive or negative impact, depending on the test. The worst-case scenario has people adjusting text to game the tests into delivering favorable scores.

No current readability test accounts for the hallmarks of easily-consumed online content:

- Concise sentences free of filler words

- Bullets

- Subheads

- Emphasis (bold, H1, H2, etc.)

- Shorter, easily-read paragraphs

Add a few more variables into the equation by considering responsive design implications, device capabilities, and context, and soon the notion of an agreed-upon readability score is RIGHT OUT.

We are creating content destined for literally thousands of different environments. Even if we had a measure that took into account how that content was displayed now, it would be out of date in a week.
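The structural hallmarks listed earlier in this section are easy enough to count on their own, even though no traditional score folds them in. The Python sketch below is one hedged way to surface them for a human reviewer. It assumes the content is available as Markdown, and the function name and dictionary keys are hypothetical. It reports counts only and makes no claim about what a good number is.

```python
import re


def structure_signals(markdown_text: str) -> dict:
    """Count simple structural cues in Markdown text (assumed input format)."""
    lines = markdown_text.splitlines()
    # Bullet lines start with -, *, or + followed by a space and some content.
    bullets = sum(1 for ln in lines if re.match(r"\s*[-*+]\s+\S", ln))
    # Subheads are ATX-style headings: one to six # characters, then text.
    subheads = sum(1 for ln in lines if re.match(r"#{1,6}\s+\S", ln))
    # Bold spans are written as **text** on a single line.
    bold_spans = len(re.findall(r"\*\*[^*\n]+\*\*", markdown_text))
    # Blocks are separated by blank lines; size is whitespace-separated tokens,
    # so bullet markers and heading markers count as tokens too.
    blocks = [b for b in re.split(r"\n\s*\n", markdown_text) if b.strip()]
    longest = max((len(b.split()) for b in blocks), default=0)
    return {
        "bullets": bullets,
        "subheads": subheads,
        "bold_spans": bold_spans,
        "longest_block_tokens": longest,
    }


if __name__ == "__main__":
    sample = "## Plan\n\n- First step\n- Second step\n\nThis is **important** text."
    print(structure_signals(sample))
```

A tally like this is a prompt for editorial judgment, not a replacement for it. It says nothing about devices, layout, or context.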

Don’t measure readability only with a number

Content strategists are often asked how we might measure the effectiveness of our efforts. Sometimes we can point to cost savings, favorable return on key performance indicators, or other data-based measures.

Other times, our value is best presented in refinements to the overall user experience, which can be tough to measure with a series of numbers.

A single number is hard to resist. You might be begging for an easy, scalable, plug-and-play solution for your online content readability measurement needs. Instead, you might wish to make a checklist of sorts, from a messaging point of view:

- Does this page/element stick to a single message overall?

- Does the content avoid jargon and language inappropriate to the audience?

- Are the ideas presented in a clear, logical fashion from a user perspective?

- Does the page have a clear objective (and call-to-action, if appropriate)?

- Can users scan and quickly understand what they will get from the page?

Readability scores are not completely without merit. I still use them for passages and paragraphs, when appropriate. But they simply cannot be used for measuring an entire page’s readability.

In the meantime, go find the board book version of “Go, Dog. Go!” at your library. (I didn’t even let any story spoilers slip. The ending is AWESOME.)